Many product teams assume that literal translation equals accurate localization, but this misconception often leads to poor user experiences and costly rework. True translation accuracy encompasses cultural nuance, contextual meaning, and seamless workflow integration across design, development, and content creation. This comprehensive guide explores the metrics, methodologies, and practical strategies that product managers, UX writers, and developers need to enhance translation accuracy and streamline localization workflows. You’ll discover how to measure quality effectively, balance speed with precision, overcome linguistic challenges, and leverage modern tools to deliver globally resonant products that maintain brand voice across every market.

Key Takeaways

| Point | Details |
|---|---|
| First-time quality | Track first-time quality along with error rate, EPT, TTE, turnaround time, and CSAT to measure translation accuracy and workflow health. |
| Hybrid MTPE benefits | Hybrid machine translation with post-editing speeds up work compared with pure human translation, while human review keeps quality high. |
| Adaptive translation APIs | Adaptive or context-aware translation APIs adjust output based on content type and audience to preserve tone and terminology. |
| Culture and workflow integration | Cultural and linguistic nuances must be addressed, and translations must blend with design, development, and content creation workflows. |

Understanding translation accuracy metrics for teams

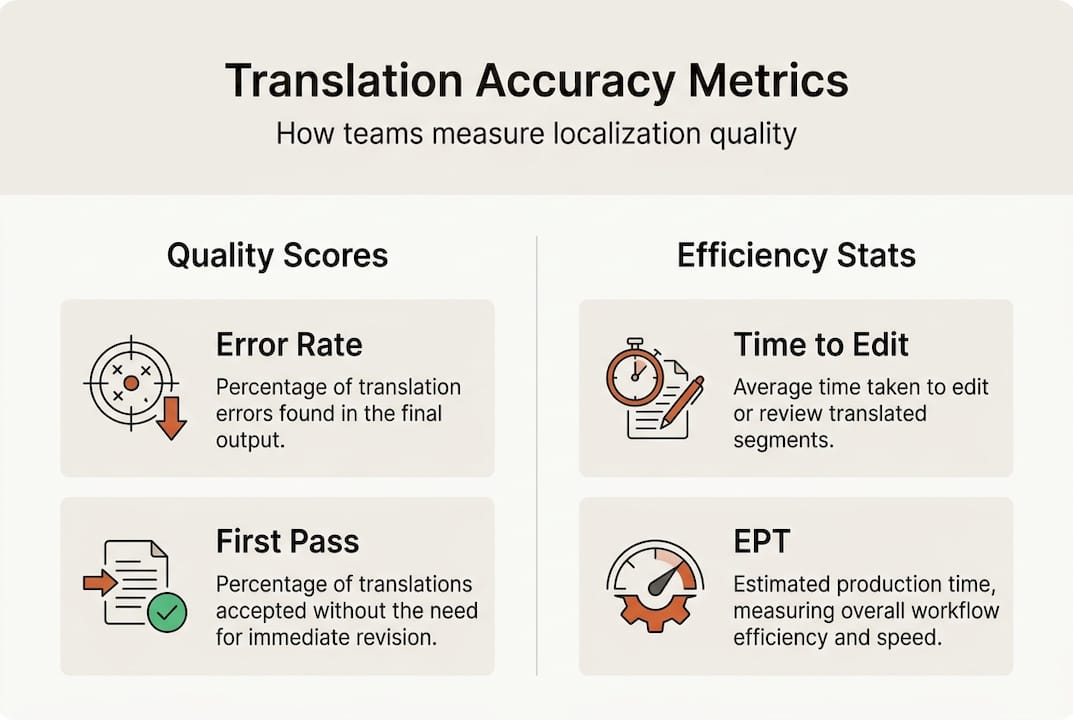

Product teams need concrete ways to measure and benchmark translation quality beyond subjective impressions. Key metrics for localization teams include first-time quality, error rate, EPT, TTE, turnaround time, and CSAT, providing a comprehensive view of both linguistic precision and workflow efficiency. First-time quality measures the percentage of translations that require no corrections after initial delivery, directly reflecting the effectiveness of your translation process and team coordination. Error rate tracks the frequency of mistakes per project, helping teams identify patterns and training needs across different language pairs or content types.

Two specialized efficiency metrics deserve particular attention. Errors Per Thousand words (EPT) quantifies mistake density, allowing teams to compare quality across projects of different sizes and establish realistic benchmarks for various content types. Time to Edit (TTE) measures how long human reviewers spend correcting machine or initial human translations, revealing whether your hybrid workflows actually save time or create additional friction. These metrics together paint a picture of whether your localization approach delivers both quality and velocity.
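To make these definitions concrete, here is a minimal Python sketch that computes first-time quality, EPT, and TTE (expressed as a share of initial translation time) from a handful of reviewed jobs. The `ReviewedJob` record and its field names are assumptions for illustration, not the export format of any particular TMS, and the sample numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class ReviewedJob:
    """One translation job after review (hypothetical record shape)."""
    word_count: int           # words in the delivered translation
    errors_found: int         # errors logged by the reviewer
    translate_minutes: float  # time spent producing the first draft
    edit_minutes: float       # time the reviewer spent correcting it


def first_time_quality(jobs: list[ReviewedJob]) -> float:
    """Percentage of jobs that needed no corrections after delivery."""
    clean = sum(1 for job in jobs if job.errors_found == 0)
    return 100.0 * clean / len(jobs)


def errors_per_thousand(jobs: list[ReviewedJob]) -> float:
    """EPT: total errors normalised to 1,000 words."""
    total_words = sum(job.word_count for job in jobs)
    total_errors = sum(job.errors_found for job in jobs)
    return 1000.0 * total_errors / total_words


def edit_time_share(jobs: list[ReviewedJob]) -> float:
    """TTE as a percentage of the initial translation time."""
    translate = sum(job.translate_minutes for job in jobs)
    edit = sum(job.edit_minutes for job in jobs)
    return 100.0 * edit / translate


# Invented sample data, only to exercise the functions.
jobs = [
    ReviewedJob(word_count=1200, errors_found=0, translate_minutes=60, edit_minutes=10),
    ReviewedJob(word_count=800, errors_found=3, translate_minutes=40, edit_minutes=15),
    ReviewedJob(word_count=2000, errors_found=1, translate_minutes=100, edit_minutes=25),
    ReviewedJob(word_count=1000, errors_found=0, translate_minutes=50, edit_minutes=5),
]

print(f"First-time quality: {first_time_quality(jobs):.1f}%")
print(f"EPT: {errors_per_thousand(jobs):.2f}")
print(f"TTE share: {edit_time_share(jobs):.1f}%")
```

Normalising errors by word count is what lets a 200-word UI batch be compared with a 20,000-word help center export. The functions assume at least one job with a non-zero word count and draft time.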

Beyond pure linguistic measures, operational metrics reveal how translation accuracy affects your broader product development cycle. Turnaround time shows whether localization becomes a release blocker, while customer satisfaction scores (CSAT) from users in different markets indicate whether your translations truly resonate. Understanding software localization impact helps teams connect these metrics to business outcomes like user retention and market penetration.

| Metric | Definition | Typical benchmark |
|---|---|---|
| First-time quality | Percentage requiring no corrections | 85-95% for mature workflows |
| Error rate | Mistakes per project | Under 2% for professional work |
| EPT | Errors per thousand words | 1-3 for high-quality output |
| TTE | Time reviewers spend on corrections | 20-30% of initial translation time |
| Turnaround time | Days from request to delivery | 1-3 days for typical UI strings |
| CSAT | User satisfaction by market | 4+ out of 5 in localized regions |

Pro Tip: Regularly review these metrics with your team to identify workflow bottlenecks early, adjusting processes before quality issues compound across multiple releases.

Proven methodologies for improving translation accuracy

Once you understand what to measure, the next question is how to hit those quality targets efficiently. Hybrid Machine Translation Post-Editing (MTPE) has emerged as the dominant approach for product teams, combining the speed of AI-generated translations with human expertise to catch nuance and context. Hybrid MTPE and adaptive APIs can cut translation time by 30-50% compared with pure human translation while human post-editing keeps quality high, making it possible to maintain velocity without sacrificing accuracy. This methodology works particularly well for UI strings and help documentation, where consistency and speed matter more than creative flourish.

Adaptive or context-aware translation APIs take this further by adjusting output based on content type and audience. A casual marketing headline requires different treatment than a technical error message, even when translating the same source phrase. Modern APIs can recognize these distinctions and apply appropriate formality levels, terminology preferences, and stylistic conventions automatically. For example, a UX button label might prioritize brevity and action orientation, while technical documentation for the same feature needs precision and completeness even if it results in longer text.

Understanding the tradeoffs between different translation approaches helps teams make informed decisions based on content type and business constraints. Following 2026 localization best practices means matching methodology to context rather than applying one approach universally.

| Approach | Pros | Cons | Best for |
|---|---|---|---|
| Full machine translation | Fastest, lowest cost, unlimited scale | Misses nuance, cultural context, brand voice | Initial drafts, internal docs |
| Hybrid MTPE | Balanced speed and quality, cost effective | Requires skilled post-editors, some inconsistency | UI strings, help content, product descriptions |
| Pure human translation | Highest quality, best cultural adaptation | Slowest, most expensive, limited scalability | Marketing copy, legal text, brand messaging |
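One way to put the comparison to work is to encode the "Best for" column as a routing rule, so each incoming string is sent to a sensible workflow by default. The sketch below is illustrative: the content-type labels and the fallback to hybrid MTPE for unknown types are assumptions, not part of any specific tool.

```python
from enum import Enum


class Approach(Enum):
    FULL_MT = "full machine translation"
    HYBRID_MTPE = "hybrid MTPE"
    HUMAN = "pure human translation"


# Hypothetical content-type labels; the mapping mirrors the "Best for" column.
BEST_FOR = {
    "initial_draft": Approach.FULL_MT,
    "internal_doc": Approach.FULL_MT,
    "ui_string": Approach.HYBRID_MTPE,
    "help_content": Approach.HYBRID_MTPE,
    "product_description": Approach.HYBRID_MTPE,
    "marketing_copy": Approach.HUMAN,
    "legal_text": Approach.HUMAN,
    "brand_messaging": Approach.HUMAN,
}


def choose_approach(content_type: str) -> Approach:
    """Suggest an approach; unknown types fall back to hybrid MTPE (an assumption)."""
    return BEST_FOR.get(content_type, Approach.HYBRID_MTPE)


for content_type in ("ui_string", "legal_text", "release_notes"):
    print(content_type, "->", choose_approach(content_type).value)
```

Teams with stricter risk tolerance might prefer to fall back to pure human translation for anything unclassified.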

Continuous localization integrated into CI/CD pipelines represents the cutting edge for software teams, automatically triggering translation workflows whenever developers commit new strings. This approach prevents the translation backlog that traditionally delays releases, ensuring localized versions ship simultaneously with source language updates. Recognizing why traditional localization tools are failing helps teams understand why continuous approaches outperform batch translation projects for agile development environments.
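At the heart of a continuous localization step is a simple comparison: which source strings are new or changed since the last sync? The following sketch shows that comparison on two in-memory string catalogs. The key names and the flat key-to-text dictionary format are assumptions for illustration; a real pipeline would load them from resource files and hand the result to its TMS.

```python
def strings_needing_translation(
    previous: dict[str, str], current: dict[str, str]
) -> dict[str, str]:
    """Return keys that are new or whose source text changed since the last sync."""
    return {
        key: text
        for key, text in current.items()
        if previous.get(key) != text
    }


def obsolete_keys(previous: dict[str, str], current: dict[str, str]) -> set[str]:
    """Return keys removed from the source, whose translations can be retired."""
    return set(previous) - set(current)


# Hypothetical catalogs from the last sync and from the latest commit.
last_synced = {
    "cart.title": "Your cart",
    "cart.checkout": "Check out",
    "cart.promo": "Have a promo code?",
}
latest_commit = {
    "cart.title": "Your cart",
    "cart.checkout": "Check out now",
    "cart.empty": "Your cart is empty",
}

pending = strings_needing_translation(last_synced, latest_commit)
print(sorted(pending))                            # keys to send for translation
print(obsolete_keys(last_synced, latest_commit))  # keys to retire
```

Running this on every commit keeps each translation request small, which is what prevents a backlog from forming before a release.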

Pro Tip: Enforce glossary and style guide use early with QA automation to reduce costly rework, catching terminology inconsistencies before they propagate across your entire product.
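A glossary check of this kind can start very small. The sketch below flags source terms whose approved translation does not appear in the target string. The glossary entries and sample sentences are invented, and the plain substring match is a simplifying assumption: it will not recognize inflected forms, so a production check would need language-aware matching.

```python
import re


def glossary_violations(source: str, target: str, glossary: dict[str, str]) -> list[str]:
    """List source terms whose approved translation is missing from the target."""
    missing = []
    for source_term, approved in glossary.items():
        # Match the source term as a whole word, ignoring case.
        in_source = re.search(rf"\b{re.escape(source_term)}\b", source, re.IGNORECASE)
        if in_source and approved.lower() not in target.lower():
            missing.append(source_term)
    return missing


# Hypothetical English-to-French glossary.
glossary = {"dashboard": "tableau de bord", "workspace": "espace de travail"}

source = "Open your dashboard to manage the workspace."
off_glossary = "Ouvrez votre panneau pour gérer l'espace de travail."
on_glossary = "Ouvrez votre tableau de bord pour gérer l'espace de travail."

print(glossary_violations(source, off_glossary, glossary))
print(glossary_violations(source, on_glossary, glossary))
```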

Addressing linguistic and cultural challenges in team translations

Even with solid metrics and methodologies, product teams encounter specific linguistic and cultural obstacles that threaten translation accuracy. Text expansion is among the most common issues, with languages like German or Finnish producing strings 30-40% longer than English equivalents and breaking carefully designed UI layouts. Right-to-left scripts like Arabic and Hebrew require complete interface mirroring, affecting not just text direction but icon placement and visual hierarchy. Pluralization rules vary dramatically: Polish, for example, picks among several plural forms depending on the number itself (one form for 1, another for numbers ending in 2-4 except 12-14, and another for most other counts), a distinction English speakers never have to consider.
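The Polish case shows why a simple "singular or plural" switch breaks down. The sketch below hand-codes the plural categories for whole numbers as described by the Unicode CLDR rules for Polish. It is an illustration only: it ignores fractions, and real products should rely on their i18n library's plural support rather than a hand-written rule.

```python
def polish_plural_category(n: int) -> str:
    """Plural category for a non-negative whole number in Polish (CLDR-style names)."""
    if n == 1:
        return "one"
    if n % 10 in (2, 3, 4) and n % 100 not in (12, 13, 14):
        return "few"
    return "many"


# The noun "plik" (file) in the three forms used with whole numbers.
FILE_FORMS = {"one": "plik", "few": "pliki", "many": "plików"}


def files_label(n: int) -> str:
    """Build a count label such as '5 plików'."""
    return f"{n} {FILE_FORMS[polish_plural_category(n)]}"


for count in (1, 2, 5, 12, 22):
    print(files_label(count))
# 1 plik
# 2 pliki
# 5 plików
# 12 plików
# 22 pliki
```

A string table that stores only one singular and one plural form cannot represent this, which is why plural-aware message formats matter from the first release.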

Idioms and culturally specific references pose subtler challenges that metrics alone won’t catch. A phrase that works perfectly in American English might confuse or offend users in other markets, even when translated literally with perfect grammar. Domain-specific terminology creates similar issues, as technical terms may have multiple valid translations depending on industry context, and choosing the wrong variant damages credibility with expert users. UI truncation happens when translated text exceeds available space, forcing awkward abbreviations or wrapping that disrupts the visual design product teams carefully crafted.

Semantic drift represents perhaps the most insidious accuracy problem, where technically correct translations fail to convey the intended meaning or emotional resonance. A literal translation might be grammatically perfect yet completely miss the point, leaving users confused about what action to take or what benefit to expect.

“Companies see user retention drop 25% in markets where translations are literally accurate but culturally tone-deaf, proving that translation accuracy must account for meaning and context, not just word-for-word correctness.”

Practical strategies help teams navigate these challenges systematically. Collaborate closely with linguistic experts who understand both the source and target cultures, not just the languages, ensuring translations preserve intent alongside literal meaning. Use pseudo-localization during development to test UI constraints before actual translation begins, replacing English strings with longer accented text to reveal layout problems early. Exploring examples of translation challenges in localization provides concrete scenarios teams can learn from and prepare for proactively.
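Pseudo-localization is simple enough to sketch in a few lines. The example below swaps vowels for accented versions, pads each string by roughly 40% to simulate text expansion, and wraps it in brackets so truncation is easy to spot on screen. The 40% default, the `~` padding character, and the `{name}` placeholder syntax are assumptions chosen for illustration.

```python
import re

# Accented stand-ins for ASCII vowels; the text stays readable but visibly "foreign".
ACCENTED = str.maketrans({
    "a": "á", "e": "é", "i": "í", "o": "ó", "u": "ú",
    "A": "Á", "E": "É", "I": "Í", "O": "Ó", "U": "Ú",
})

# Assumed placeholder syntax: {name}. Placeholders must survive untouched.
PLACEHOLDER = re.compile(r"(\{[^{}]*\})")


def pseudo_localize(text: str, expansion: float = 0.4) -> str:
    """Return an accented, padded, bracketed version of a source string."""
    parts = PLACEHOLDER.split(text)  # the capturing group keeps placeholders in the list
    accented = "".join(
        part if PLACEHOLDER.fullmatch(part) else part.translate(ACCENTED)
        for part in parts
    )
    padding = "~" * round(len(text) * expansion)
    return f"[{accented}{padding}]"


print(pseudo_localize("Save {count} files"))
print(pseudo_localize("Settings"))
```

If a closing bracket disappears in the running UI, the layout will likely truncate real translations in the same place.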

Global UX localization strategy research confirms that text expansion, RTL layouts, plurals, cultural nuances, and domain terminology are frequent edge cases affecting accuracy, requiring deliberate design and workflow accommodations rather than afterthought fixes.

Pro Tip: Involve UX writers in localization early to ensure cultural meaning and usability align, preventing the disconnect between what developers build and what users actually need in each market.

Leveraging tools and workflows to streamline translation accuracy for teams

Modern tooling transforms translation accuracy from a manual quality control burden into an automated, collaborative workflow that scales with your product. Implementing efficient localization workflows requires deliberate steps that integrate quality checks throughout your development cycle rather than treating translation as a final pre-release task.

Select a Translation Management System (TMS) that integrates with your existing development tools, supporting API connections to your codebase, design files, and content repositories for seamless string extraction and updates.

Define comprehensive glossaries and style guides before beginning translation work, documenting terminology preferences, brand voice guidelines, and formatting standards that all translators and reviewers must follow consistently.

Integrate localization into your CI/CD pipeline so new or modified strings automatically trigger translation workflows, preventing backlogs and ensuring localized versions stay synchronized with source language releases.

Automate QA workflows to catch common errors like missing variables, broken formatting tags, inconsistent terminology, and length violations before human reviewers waste time on mechanical issues.

Establish feedback loops connecting customer support, user research, and localization teams so real-world usage problems inform continuous improvements to glossaries, style guides, and translation approaches.
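The automated QA step in this list lends itself to a short illustration. The sketch below checks two of the mechanical errors mentioned above, missing variables and length violations, for a single source and target pair. The `{name}` placeholder syntax, the character limit, and the issue messages are assumptions; a real check would follow the placeholder format your codebase uses.

```python
import re
from typing import Optional

# Assumed placeholder syntax: {name}.
PLACEHOLDER = re.compile(r"\{[^{}]+\}")


def qa_issues(source: str, target: str, max_chars: Optional[int] = None) -> list[str]:
    """Return mechanical problems found in a translated string."""
    issues = []
    source_vars = sorted(PLACEHOLDER.findall(source))
    target_vars = sorted(PLACEHOLDER.findall(target))
    if source_vars != target_vars:
        issues.append(
            f"placeholder mismatch: expected {source_vars}, found {target_vars}"
        )
    if max_chars is not None and len(target) > max_chars:
        issues.append(f"too long: {len(target)} characters, limit is {max_chars}")
    return issues


source = "Hello {name}, you have {count} messages"
broken = "Hallo {name}, Sie haben Nachrichten"
fixed = "Hallo {name}, Sie haben {count} Nachrichten"

for issue in qa_issues(source, broken, max_chars=30):
    print(issue)
print(qa_issues(source, fixed))
```

Checks like these are cheap to run on every translated string, leaving human reviewers free to focus on meaning and tone.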

Collaboration among cross-functional product teams makes or breaks localization success: product managers prioritize markets and timelines, UX writers define voice and tone, developers implement technical constraints, and designers ensure visual coherence across languages. Translation accuracy isn’t about words; it’s about meaning. A TMS with QA automation and ongoing feedback loops reduces rework by enforcing glossaries and style guides early, catching problems when they’re cheap to fix rather than after release.

Context-aware review environments allow translators and reviewers to see strings within the actual UI rather than in isolated spreadsheets, dramatically improving their ability to choose appropriate translations based on available space, surrounding elements, and user workflow. Early glossary enforcement through automated checks prevents terminology drift before it spreads across hundreds of strings, maintaining consistency that users notice and appreciate. Following the localization workflow guide 2026 helps teams implement these practices systematically rather than reactively.

Continuous improvement via metrics and team communication closes the loop, ensuring your localization process gets better with each release cycle. Regular retrospectives examining error patterns, turnaround bottlenecks, and user feedback help teams refine their approach based on evidence rather than assumptions.

Pro Tip: Use in-context translation review tools to catch errors before release, saving costly fixes later and preventing the user experience problems that damage retention in international markets.

Improve your team’s translation accuracy with Gleef

The strategies and insights in this guide become dramatically easier to implement with purpose-built tools designed for modern product teams. Gleef’s AI-powered Figma plugin empowers designers and product teams to maintain translation accuracy directly within the design environment, eliminating context loss that happens when strings move through disconnected systems. By integrating localization into the design phase rather than treating it as a development afterthought, teams catch layout issues, terminology problems, and cultural mismatches before they require expensive rework.

Gleef’s platform embodies the 2026 localization best practices that leading product teams rely on, combining semantic translation memory, glossary enforcement, and in-context editing to ensure consistency and accuracy across every release. The platform’s robust API supports the continuous localization workflows that prevent translation from becoming a release blocker, while collaboration features keep product managers, UX writers, developers, and designers aligned throughout the process. Explore how Gleef can transform your team’s localization workflow from a quality bottleneck into a competitive advantage that accelerates global product success.

FAQ

How can teams measure translation accuracy effectively?

Use KPIs like error rate, first-time quality, Time to Edit, and CSAT to measure both linguistic precision and user satisfaction across markets. Combine quantitative metrics with human review to assess whether translations achieve their intended meaning and emotional resonance, not just technical correctness. Track these measures consistently across releases to identify trends and validate process improvements.

What are the benefits of hybrid machine-human translation for product teams?

Hybrid MTPE saves 30-50% of the time needed for full human translation while maintaining quality through human review of machine-generated drafts. This approach offers scalability for high-volume content like UI strings while preserving nuanced accuracy for user-facing text. Teams get faster turnaround without sacrificing the cultural adaptation and brand voice consistency that pure machine translation often misses.

How can teams handle cultural nuances and UI constraints effectively?

Test UI with pseudo-localization during development to foresee layout issues from text expansion before actual translation begins, allowing designers to build flexibility into layouts proactively. Engage cultural experts and UX writers during localization development rather than after the fact, ensuring translations adapt content beyond literal word replacement to retain intended meaning. Use in-context review tools so translators see how strings appear in the actual interface, enabling better decisions about brevity, formality, and terminology based on available space and user workflow.