Many product managers believe basic translation is sufficient for global software success. This assumption overlooks the complexity of translation quality standards, which encompass linguistic accuracy, cultural relevance, terminology consistency, and user experience optimization. Without proper standards, software localization efforts risk damaging brand reputation and user satisfaction across international markets. This guide clarifies what translation quality standards involve, why they matter for software products, and how to implement them effectively. You’ll learn measurement frameworks, common pitfalls, practical solutions, and workflow integration strategies that help localization professionals deliver consistent, high-quality translations at scale.

Key Takeaways

Point | Details |

|---|---|

Translation quality standards | Translation quality standards define the criteria and processes that ensure localized software delivers the same value, clarity, and user experience as the original version. |

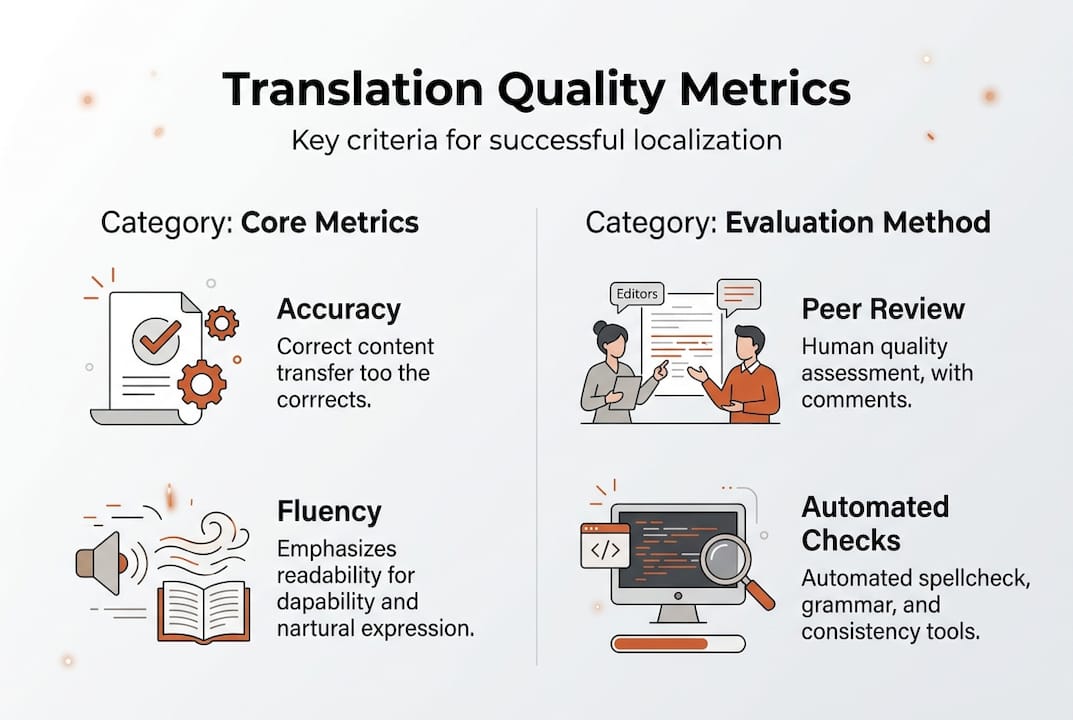

Quality metrics | Key metrics for evaluating translation quality include accuracy, fluency, consistency, completeness, and locale appropriateness. |

Measurement and tracking | Leading organizations track error rates, reviewer agreement scores, post release bug reports related to localization, and user feedback sentiment across language versions. |

Workflow integration | Balance linguistic accuracy with cultural relevance by involving native speakers in quality reviews and aligning standards with UI constraints and platform specific conventions. |

Understanding translation quality standards and their importance

Translation quality standards define the criteria and processes that ensure your localized software delivers the same value, clarity, and user experience as the original version. These standards go beyond word-for-word accuracy to encompass cultural appropriateness, brand voice consistency, technical terminology precision, and contextual relevance. For software products, quality standards must address UI text constraints, variable handling, formatting requirements, and platform-specific conventions that differ across languages and regions.

Without standardized quality controls, localization teams face cascading problems. Inconsistent terminology confuses users and undermines product credibility. Culturally inappropriate translations alienate target audiences and damage brand perception. Technical errors in localized UI strings break functionality or create usability issues. These translation challenges compound as you add languages, and quality issues become progressively harder and more expensive to fix retroactively.

Quality standards directly impact customer satisfaction and business outcomes. Users encountering poorly translated interfaces abandon products faster, leave negative reviews, and generate higher support ticket volumes. Research shows that about 75% of consumers prefer to buy products in their native language, and that preference only pays off when translations feel natural and trustworthy. Your localization quality becomes a competitive differentiator in crowded global markets.

Key metrics for evaluating translation quality include accuracy (correct meaning transfer), fluency (natural-sounding target language), consistency (uniform terminology and style), completeness (no missing content), and locale-appropriateness (cultural and regional relevance). Leading organizations track error rates, reviewer agreement scores, post-release bug reports related to localization, and user feedback sentiment across language versions.
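The paragraph above mentions reviewer agreement scores without defining one. A common choice for two reviewers is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below assumes two reviewers each assign a pass/fail label to the same list of segments; the function name and label values are illustrative, not part of any particular tool.

```python
from collections import Counter
from typing import List


def cohens_kappa(rater_a: List[str], rater_b: List[str]) -> float:
    """Cohen's kappa for two reviewers who labeled the same segments."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both reviewers must label the same, non-empty set of segments")
    n = len(rater_a)
    # Observed agreement: share of segments where both reviewers chose the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, estimated from each reviewer's own label frequencies.
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both reviewers used one identical label for every segment
    return (observed - expected) / (1 - expected)


reviewer_1 = ["pass", "pass", "fail", "pass", "fail", "pass"]
reviewer_2 = ["pass", "fail", "fail", "pass", "fail", "pass"]
print(round(cohens_kappa(reviewer_1, reviewer_2), 2))  # 0.67
```

Here the reviewers agree on 5 of 6 segments (83% raw agreement), but because half of that agreement would be expected by chance, kappa is about 0.67. Tracking kappa per language makes it easier to spot where reviewers are applying the standards differently.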

Pro Tip: Balance linguistic accuracy with cultural relevance by involving native speakers from your target markets in quality reviews, not just professional translators unfamiliar with your product domain.

Key components and metrics to measure translation quality

Effective translation quality measurement requires understanding five core components that work together to create successful localized software. Accuracy ensures the translated content conveys the exact meaning, intent, and nuance of the source material without additions, omissions, or distortions. Fluency evaluates whether translations read naturally in the target language, following grammatical conventions and idiomatic expressions native speakers expect. Consistency maintains uniform terminology, style, and voice across all translated content, creating a cohesive user experience.

Terminology management focuses specifically on technical terms, product names, feature labels, and domain-specific vocabulary that must translate identically every time they appear. Cultural appropriateness assesses whether translations respect local customs, avoid offensive content, and adapt examples, metaphors, and references to resonate with target audiences. These quality evaluation metrics provide structured frameworks for assessing localization effectiveness.

| Quality Metric | Definition | Measurement Method |
|---|---|---|
| Accuracy Score | Percentage of correctly translated meaning units | Human review with error categorization |
| Fluency Rating | Naturalness of the target-language text on a 1-5 scale | Native speaker evaluation |
| Terminology Consistency | Percentage of terms matching the approved glossary | Automated terminology checker |
| Error Density | Number of errors per 1,000 words | Combined automated and manual QA |
| Cultural Adaptation Index | Appropriateness rating for local context | Market-specific reviewer assessment |
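The Error Density and Terminology Consistency rows in the table reduce to simple ratios. A minimal sketch, assuming reviewers and automated QA have already produced the raw counts; the `ReviewResult` fields are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ReviewResult:
    """Raw counts from one review pass (hypothetical field names)."""
    word_count: int              # words in the reviewed target text
    error_count: int             # errors logged by reviewers and automated QA
    glossary_terms_found: int    # occurrences of terms that have an approved glossary entry
    glossary_terms_matched: int  # of those, occurrences using the approved translation


def error_density(result: ReviewResult) -> float:
    """Errors per 1,000 words."""
    if result.word_count <= 0:
        raise ValueError("word_count must be positive")
    return result.error_count * 1000 / result.word_count


def terminology_consistency(result: ReviewResult) -> float:
    """Percentage of glossary-term occurrences that use the approved translation."""
    if result.glossary_terms_found == 0:
        return 100.0  # no glossary terms in scope, so nothing can be inconsistent
    return result.glossary_terms_matched * 100 / result.glossary_terms_found


review = ReviewResult(word_count=2500, error_count=5,
                      glossary_terms_found=40, glossary_terms_matched=38)
print(error_density(review))            # 2.0
print(terminology_consistency(review))  # 95.0
```

Computing these per language and per release gives the trend data that the monitoring practices later in this guide depend on.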

Automated quality checks identify many issues quickly, including spelling errors, broken variables, formatting inconsistencies, length violations, and terminology mismatches against approved glossaries. These tools scan translations in seconds, flagging potential problems for human review. However, automated systems cannot fully assess meaning accuracy, cultural nuance, or contextual appropriateness.
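Two of the automated checks listed above, broken variables and length violations, are easy to sketch. This example assumes placeholders look like `{name}`, `%s`/`%d`, or `%(name)s`, and that each UI string may carry an optional character limit; real projects should adapt the pattern to their own message format (ICU, printf, and so on).

```python
import re
from typing import List, Optional

# Assumed placeholder syntaxes: {name} / {0}, %(name)s / %(name)d, and %s / %d.
PLACEHOLDER_RE = re.compile(r"\{[A-Za-z0-9_]+\}|%\([A-Za-z0-9_]+\)[sd]|%[sd]")


def check_string(key: str, source: str, target: str,
                 max_length: Optional[int] = None) -> List[str]:
    """Return human-readable issues for one translated string (empty list = passed)."""
    # Completeness: an empty translation is flagged and no further checks run.
    if not target.strip():
        return [f"{key}: missing translation"]
    issues: List[str] = []
    # Broken variables: the target must contain exactly the same placeholders as the source.
    if sorted(PLACEHOLDER_RE.findall(source)) != sorted(PLACEHOLDER_RE.findall(target)):
        issues.append(f"{key}: placeholder mismatch")
    # Length violations: UI labels often have a hard character budget.
    if max_length is not None and len(target) > max_length:
        issues.append(f"{key}: {len(target)} chars exceeds limit of {max_length}")
    return issues


print(check_string("greeting", "Hello, {name}!", "Bonjour, {name} !", max_length=30))
# []
print(check_string("cart.count", "You have {count} items",
                   "Vous avez {nombre} articles", max_length=20))
# ['cart.count: placeholder mismatch', 'cart.count: 27 chars exceeds limit of 20']
```

Checks like these are cheap enough to run on every string change; anything they cannot judge, such as tone or cultural fit, still goes to human review.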

Human review remains essential for evaluating subjective quality dimensions. Professional reviewers assess whether translations sound natural, convey appropriate tone, and work within your product’s specific context. Combining automated and human approaches creates comprehensive quality assurance:

- Automated spell checkers and grammar validators catch basic errors
- Translation memory systems ensure consistency across related content
- Terminology management tools enforce approved vocabulary
- AI-powered quality estimation predicts potential issues before human review
- Native speaker evaluations provide final validation of cultural appropriateness
- In-context review tools let reviewers see translations within actual UI layouts
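The translation memory item above relies on finding previously translated segments that resemble a new one. A minimal sketch using character-level fuzzy matching from Python's standard `difflib`; the 0.75 threshold and the in-memory dictionary are assumptions, and production systems typically use indexed storage and tokenization rather than a linear scan.

```python
from difflib import SequenceMatcher
from typing import Dict, Optional, Tuple


def tm_lookup(source: str, memory: Dict[str, str],
              threshold: float = 0.75) -> Optional[Tuple[str, str, float]]:
    """Return (stored_source, stored_translation, score) for the best fuzzy match, or None."""
    best: Optional[Tuple[str, str, float]] = None
    for stored_source, stored_target in memory.items():
        # ratio() is 1.0 for identical strings and falls toward 0.0 as they diverge.
        score = SequenceMatcher(None, source.lower(), stored_source.lower()).ratio()
        if score >= threshold and (best is None or score > best[2]):
            best = (stored_source, stored_target, score)
    return best


memory = {
    "Save changes": "Enregistrer les modifications",
    "Delete account": "Supprimer le compte",
}
match = tm_lookup("Save all changes", memory)
if match is not None:
    print(f"Suggest '{match[1]}' (fuzzy score {match[2]:.2f})")
```

This is surface-level matching on characters; the semantic translation memory described later in this guide compares meaning instead, which catches rephrasings that share few characters.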

Challenges and solutions when implementing translation quality standards

Software companies encounter predictable obstacles when establishing and maintaining translation quality standards across growing product portfolios and expanding language sets. Inconsistent terminology represents the most common challenge, occurring when different translators use varying terms for the same concept across product areas, documentation, and marketing materials. Users notice these inconsistencies immediately, perceiving the product as unpolished or unreliable.

Manual translation processes introduce human errors that multiply as content volume increases. Translators working in isolated documents miss context from related screens, producing translations that are technically accurate but functionally inappropriate. Copy-paste mistakes, variable-handling errors, and formatting problems slip through when quality checks rely solely on human attention across thousands of strings.

Scaling quality standards across multiple languages multiplies complexity. Keeping terminology consistent becomes nearly impossible when approved translations for 20+ languages are tracked in spreadsheets or disconnected tools. Review cycles stretch longer as each language requires separate validation, delaying product releases and frustrating development teams.

Traditional localization tools struggle with modern software development workflows, forcing context switching between design tools, code repositories, translation platforms, and review environments. This fragmentation breaks the feedback loop between designers, developers, and translators, allowing quality issues to persist undetected until late in the release cycle.

Modern solutions address these challenges through integrated, AI-enhanced approaches:

- Centralized terminology databases automatically flag deviations across all content
- AI-powered translation suggestions maintain consistency while adapting to context
- Automated quality checks run continuously, catching errors before human review
- In-context editing tools let translators see exactly how text appears in actual UI
- Semantic translation memory recognizes similar meanings, not just identical strings
- API integrations connect localization directly to development workflows
- Real-time collaboration features enable immediate feedback between team members
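The centralized terminology database item can be approximated with a simple rule: if a glossary term appears in the source string, its approved translation should appear in the target. This sketch assumes a flat English-to-French glossary (the entries are hypothetical) and uses plain case-insensitive matching, so it will not handle inflected forms or scripts without word boundaries; treat its output as flags for human review, not verdicts.

```python
import re
from typing import Dict, List

# Hypothetical glossary: English source term -> approved French translation.
GLOSSARY: Dict[str, str] = {
    "workspace": "espace de travail",
    "dashboard": "tableau de bord",
}


def flag_glossary_deviations(source: str, target: str,
                             glossary: Dict[str, str]) -> List[str]:
    """Flag glossary terms present in the source whose approved translation is absent."""
    flags: List[str] = []
    for term, approved in glossary.items():
        # Whole-word, case-insensitive match of the source term.
        if re.search(rf"\b{re.escape(term)}\b", source, re.IGNORECASE):
            # Naive containment check on the target; inflected forms will be flagged too.
            if approved.casefold() not in target.casefold():
                flags.append(f"'{term}' should be translated as '{approved}'")
    return flags


print(flag_glossary_deviations("Open your dashboard",
                               "Ouvrez votre panneau de contrôle", GLOSSARY))
# ["'dashboard' should be translated as 'tableau de bord'"]
```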

Pro Tip: Integrate localization quality checks into your continuous integration pipeline, treating translation errors with the same priority as code bugs to catch issues before they reach production.
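One way to follow this tip is a small script that fails the build when a locale file has missing keys, stale keys, or placeholder mismatches. The sketch assumes flat JSON files mapping string keys to text (for example `en.json` and `fr.json`) and `{name}`-style placeholders; the file names, script name, and checks are illustrative, and most CI systems treat a non-zero exit code as a failed step.

```python
import json
import re
import sys
from pathlib import Path
from typing import Dict, List

PLACEHOLDER_RE = re.compile(r"\{[A-Za-z0-9_]+\}")  # assumes {name}-style placeholders


def find_errors(source: Dict[str, str], target: Dict[str, str]) -> List[str]:
    """Compare a target locale against the source locale and list blocking errors."""
    errors: List[str] = []
    for key, text in source.items():
        translated = target.get(key)
        if translated is None or not translated.strip():
            errors.append(f"{key}: missing translation")
        elif sorted(PLACEHOLDER_RE.findall(text)) != sorted(PLACEHOLDER_RE.findall(translated)):
            errors.append(f"{key}: placeholder mismatch")
    # Keys that exist only in the target usually belong to deleted source strings.
    for key in sorted(target.keys() - source.keys()):
        errors.append(f"{key}: not in source (stale key)")
    return errors


def main(source_path: str, target_path: str) -> int:
    source = json.loads(Path(source_path).read_text(encoding="utf-8"))
    target = json.loads(Path(target_path).read_text(encoding="utf-8"))
    errors = find_errors(source, target)
    for error in errors:
        print(error, file=sys.stderr)
    return 1 if errors else 0  # non-zero exit fails the CI step


if __name__ == "__main__" and len(sys.argv) == 3:
    # Hypothetical invocation: python check_l10n.py locales/en.json locales/fr.json
    sys.exit(main(sys.argv[1], sys.argv[2]))
```

Running one invocation per target locale keeps failures attributable to a single language, which makes it clearer who needs to fix what.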

Best practices for integrating and maintaining translation quality standards in localization workflows

Successfully embedding translation quality standards into daily operations requires systematic workflow design that makes quality assurance automatic rather than optional. Follow these steps to standardize your localization process:

1. Establish comprehensive style guides defining tone, formality level, terminology preferences, and formatting conventions for each target language and market.
2. Create and maintain centralized glossaries with approved translations for all product-specific terms, feature names, and technical vocabulary.
3. Select integrated tools that connect design, development, and localization environments, eliminating context switching and manual file transfers.
4. Implement automated quality gates that prevent low-quality translations from advancing through your workflow without explicit override.
5. Train all team members on quality standards, tool usage, and collaboration processes, ensuring designers and developers understand localization requirements.
6. Schedule regular quality audits reviewing random samples across languages to identify systematic issues and improvement opportunities.
7. Collect and analyze user feedback from each market, using real-world usage data to refine quality standards and catch issues automated tools miss.
8. Document lessons learned from quality failures, updating standards and processes to prevent recurrence.
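The audit step above calls for reviewing random samples across languages. A minimal sketch, assuming translations are held as a mapping from locale to `{key: text}`; the fixed seed is an assumption that makes a given audit reproducible, so a second reviewer can pull exactly the same sample.

```python
import random
from typing import Dict, List


def sample_for_audit(strings_by_locale: Dict[str, Dict[str, str]],
                     per_locale: int, seed: int) -> Dict[str, List[str]]:
    """Pick up to `per_locale` random string keys from each locale for manual review."""
    rng = random.Random(seed)  # seeded generator: same inputs and seed give the same sample
    sample: Dict[str, List[str]] = {}
    for locale in sorted(strings_by_locale):
        # Sort keys so the result does not depend on dictionary insertion order.
        keys = sorted(strings_by_locale[locale])
        sample[locale] = rng.sample(keys, min(per_locale, len(keys)))
    return sample


translations = {
    "fr": {f"key{i}": "..." for i in range(10)},
    "de": {"a": "...", "b": "..."},
}
print(sample_for_audit(translations, per_locale=3, seed=2024))
```

Sampling a fixed number of strings per locale, rather than from one combined pool, keeps low-volume languages from being crowded out of the audit by high-volume ones.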

| Workflow Aspect | Manual Approach | AI-Enhanced Approach | Quality Impact |
|---|---|---|---|
| Initial translation | Human translator working from source files | AI suggestions with human review and editing | 40% faster with maintained accuracy |
| Terminology consistency | Manual glossary lookup and application | Automatic flagging of non-standard terms | 85% reduction in terminology errors |
| Context understanding | Translators guess from string IDs and comments | In-context preview showing actual UI placement | 60% fewer contextual mismatches |
| Quality review | Sequential human review of all content | AI pre-screening flags issues for human focus | 50% reduction in review time |
| Update management | Manual tracking of changed strings | Automated identification and translation memory leverage | 70% faster update cycles |
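The update-management row hinges on knowing which source strings changed since the last localization run. A minimal sketch, assuming you store a SHA-256 fingerprint of each source string when it is sent for translation; only added or changed keys then need new work, while unchanged keys keep their existing translations.

```python
import hashlib
from typing import Dict, List


def fingerprint(text: str) -> str:
    """Stable hash of a source string, stored alongside its translation."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def diff_source(previous: Dict[str, str], current: Dict[str, str]) -> Dict[str, List[str]]:
    """Compare stored fingerprints (key -> hash) with the current source (key -> text)."""
    added = sorted(key for key in current if key not in previous)
    changed = sorted(key for key in current
                     if key in previous and fingerprint(current[key]) != previous[key])
    removed = sorted(key for key in previous if key not in current)
    return {"added": added, "changed": changed, "removed": removed}


stored = {"title": fingerprint("Welcome"), "cta": fingerprint("Sign up")}
current = {"title": "Welcome", "cta": "Create account", "footer": "Contact us"}
print(diff_source(stored, current))
# {'added': ['footer'], 'changed': ['cta'], 'removed': []}
```

Storing hashes instead of full source text keeps the record small; changed keys can still be pre-filled from translation memory before a translator sees them.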

Continuous monitoring ensures standards remain effective as your product and markets evolve. Track quality metrics over time, identifying trends that indicate process improvements or emerging problems. Modern localization workflows combine AI automation with human expertise, letting technology handle repetitive quality checks while people focus on nuanced cultural adaptation and creative problem-solving.

Collaboration between product managers and localization professionals strengthens quality outcomes significantly. Product managers provide critical context about feature intent, user workflows, and business priorities that help translators make better decisions. Localization experts educate product teams about linguistic constraints, cultural considerations, and market-specific requirements that influence design and content strategy. Regular communication prevents late-stage surprises and builds mutual understanding that improves quality across all languages.

Enhance your translation quality with Gleef localization tools

Maintaining rigorous translation quality standards becomes significantly easier when your tools actively support quality assurance rather than creating friction. Gleef’s AI-powered localization platform embeds quality controls directly into your existing workflows, eliminating the context switching and manual processes that allow errors to slip through.

The Gleef Figma plugin lets designers and product managers manage translations without leaving their design environment, ensuring translators see exact UI context for every string. Semantic translation memory automatically suggests consistent translations while adapting to different contexts, and built-in glossary enforcement flags terminology deviations before they reach production.

For development teams, Gleef CLI tools integrate localization quality checks into continuous integration pipelines, treating translation errors with the same rigor as code bugs. Automated validation catches formatting issues, variable problems, and length violations immediately, while AI-powered quality estimation identifies potentially problematic translations for human review before deployment.

Pro Tip: Implementing integrated localization tools like Gleef reduces manual quality assurance overhead by up to 60%, letting your team focus on strategic localization decisions rather than repetitive error checking.

Frequently asked questions

What are translation quality standards?

Translation quality standards are structured criteria and processes that ensure localized content maintains accuracy, consistency, cultural appropriateness, and user experience quality across all target languages. These standards define acceptable error rates, terminology requirements, style guidelines, and review procedures that localization teams must follow. They serve as measurable benchmarks for evaluating translation effectiveness and maintaining brand integrity in global markets.

How can AI improve translation quality in localization?

AI automates repetitive quality checks like spelling validation, terminology consistency enforcement, and formatting verification, catching errors that humans might miss during manual review. Machine learning models identify patterns in high-quality translations, suggesting improvements and flagging potential issues before human reviewers see content. AI-powered localization tools accelerate workflows while maintaining quality standards across dozens of languages simultaneously, making comprehensive quality assurance economically feasible at scale.

What common mistakes reduce translation quality?

Ignoring cultural context creates translations that are technically accurate but culturally inappropriate or offensive to target audiences. Inconsistent terminology across product areas confuses users and undermines credibility, while inadequate review processes allow errors to reach production. Relying exclusively on manual workflows increases error rates and makes scaling impossible, as human attention cannot maintain consistent quality across thousands of strings in multiple languages.

Which tools help maintain translation quality standards?

Computer-assisted translation (CAT) tools provide translation memory and terminology management that enforce consistency across projects. Automated quality assurance software identifies formatting errors, terminology violations, and technical issues before human review. AI-assisted review platforms predict quality scores and flag potentially problematic translations for focused attention. Integration with design tools and developer environments ensures translators work with full context, dramatically reducing errors caused by misunderstanding string usage or UI constraints.