TL;DR:

Rules-based machine translation provides control and consistency for product localization.

Hybrid systems combining RBMT and NMT outperform pure neural translation in terminology-sensitive tasks.

Measuring success through terminology accuracy and rework reduction is more effective than BLEU scores.

Most product teams assume rules-based machine translation (RBMT) is a relic from the pre-AI era, something you only read about in academic papers. That assumption is costing them. Research on hybrid translation systems confirms that hybrid approaches outperform pure neural machine translation (NMT) on specialized, high-precision tasks, the exact kind of work that defines product localization. This guide breaks down what RBMT actually is, where it fits in your localization workflow, and how to use it to build translation consistency into your product from day one.

Key Takeaways

Point | Details |

|---|---|

RBMT ensures consistency | Rules-based translation keeps terminology and messaging stable across your product. |

Hybrid models outperform in niches | Combining rules-based and neural translation improves precision for specialized tasks. |

Choose the right fit | RBMT dominates when consistency matters, while NMT works for creative, unstructured content. |

Metrics beyond BLEU | Product teams should measure translation by consistency and user satisfaction, not just automated scores. |

What is rules-based translation?

To clear up what rules-based translation actually is, let’s start with how it works and what sets it apart from the neural systems you may already be using.

Rules-based machine translation (RBMT) is exactly what it sounds like. Instead of learning patterns from massive datasets, it follows a set of explicit, human-defined linguistic rules, including grammar instructions, dictionaries, and morphological (word structure) guidelines, to convert text from one language to another. Think of it as a meticulously written rulebook for language. Every output is traceable. Every decision follows a defined path.

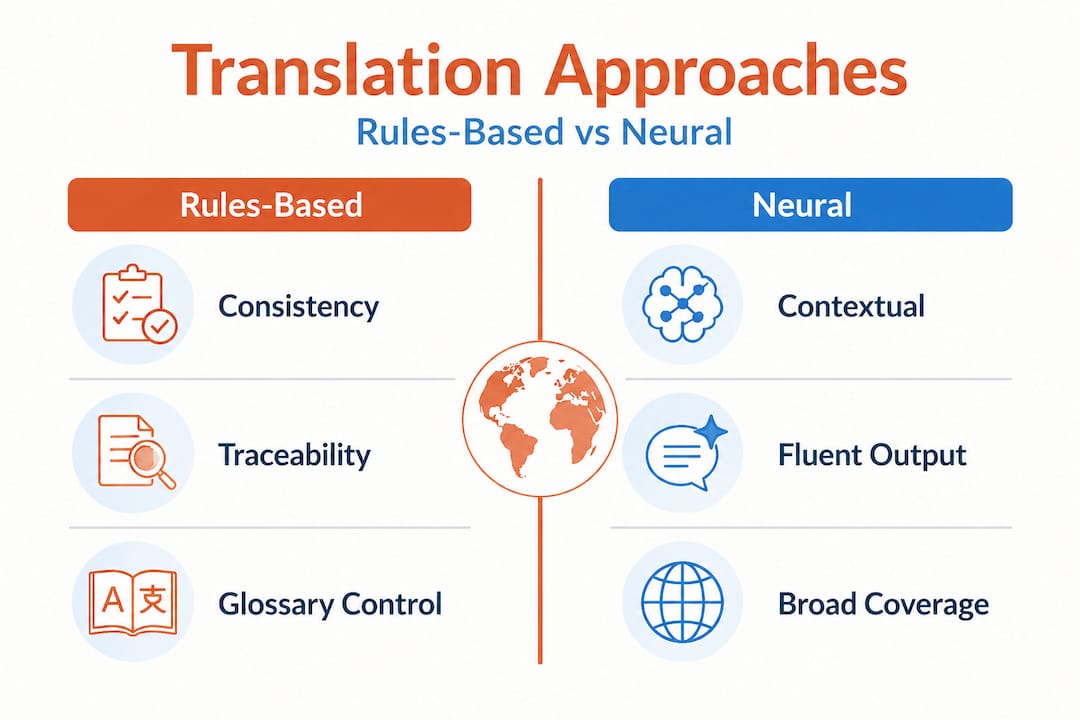

This is fundamentally different from neural machine translation (NMT), which trains on billions of examples and produces outputs that can sometimes feel fluent but are difficult to audit or control. NMT is powerful for creative content, where style and tone matter more than precise terminology. RBMT is powerful when you need predictability and control, which is almost always the case for digital product strings.

According to established RBMT system taxonomy, rules-based translation comes in three distinct types:

Direct translation: The simplest approach. Words are translated one-to-one using a bilingual dictionary, with minimal analysis of sentence structure. Fast, but limited in handling grammatical complexity.

Transfer-based translation: This approach analyzes the grammatical structure of the source language, applies transformation rules, and then generates the target language. It accounts for how sentence structure differs between languages.

Interlingual translation: The most sophisticated RBMT method. Source text is converted into a language-neutral abstract representation (the interlingua), and the target language is generated from that. This makes it more adaptable across multiple language pairs.

Here is a quick comparison to help you orient:

Type | Analyzes structure | Supports multiple languages | Best for |

|---|---|---|---|

Direct | Minimal | Limited | Simple, repetitive strings |

Transfer-based | Yes (source/target) | Moderate | Product UI, structured content |

Interlingual | Yes (abstract) | High | Multi-language platforms |

For deeper context on rules-based translation systems, the semantic foundations matter as much as the mechanics. The key insight is this: RBMT gives you control. And in product localization, control is often more valuable than fluency.

How rules-based translation drives consistency in localization

Now that we know what RBMT is, let’s see how it solves one of the hardest localization challenges: keeping meaning and terminology consistent across your entire product.

Imagine you ship a product in eight languages. Your English UI uses the word “workspace” in 40 different places. Without strict rules, translators (human or neural) might render that term differently across screens, contextually adapting it in ways that feel natural in isolation but create a jarring, inconsistent experience when a user navigates the full product. This is not a hypothetical. It happens constantly, and it silently erodes user trust.

RBMT prevents this by design. When you define “workspace” in your translation ruleset and bind it to a specific target-language equivalent, that mapping holds everywhere. Every button label, tooltip, error message, and onboarding screen reflects the same term. No variance. No guessing. No expensive review cycles to catch drift.

Research on hybrid RBMT and NMT systems shows that combining rules-based logic with neural models outperforms end-to-end NMT specifically in terminology-sensitive tasks. This is significant for product teams. It means you do not have to choose between quality and control. A hybrid system lets you encode your critical terms with rules while allowing the neural layer to handle natural language flow around them.

Here are the product areas where terminology consistency is most critical:

UI strings: Button labels, menu items, navigation links, and modal headers must be uniform across every screen and platform.

Compliance and legal messaging: Terms like “terms of service,” “data processor,” or “consent” carry regulatory weight. Inconsistent translation is a legal liability.

Onboarding tutorials: New users build their mental model of your product through onboarding. Inconsistent terms here cause confusion that increases support ticket volume.

Error messages: Users under stress read error messages carefully. A term shift between an error message and the help documentation breaks trust immediately.

In-app notifications: These strings appear out of context. They rely entirely on the consistency of the terms your users already know.

The impact of localization extends far beyond swapping words from one language to another. When content consistency in localization is enforced at the rules level, you reduce reviewer workload, accelerate release cycles, and produce a product that feels native to each market rather than translated.

Pro Tip: Maintain a curated glossary of 50 to 100 critical product terms and bind them to your RBMT rules engine. For regulated industries like fintech or healthtech, this is not optional. It is your first line of defense against costly compliance errors.

When to choose rules-based translation over neural approaches

Understanding when RBMT shines (and when it doesn’t) is crucial. Let’s compare it with more modern neural systems so you can pick the right tool for each job.

The instinct in most product teams is to reach for the newest, most capable neural model and apply it everywhere. That is understandable, neural translation has made stunning progress. But it is also how you end up with a beautifully fluent product that still uses three different words for the same core feature in the same language. Fluency is not the same as precision. For product teams, translation quality standards must account for both.

Modern MT evaluation frameworks, including WMT benchmarks, show that constrained and hybrid systems remain competitive with large language models on precision-critical tasks. This should recalibrate how you think about “best” translation: best for what context?

Here is a direct comparison to guide your decision:

Approach | Strengths | Weaknesses | Best use cases |

|---|---|---|---|

RBMT | Full control, traceable, consistent | Requires expert rule setup, less fluent | UI strings, legal text, regulated content |

Hybrid (RBMT + NMT) | Consistent terms + natural language flow | More complex to configure | Product apps, SaaS platforms, onboarding |

Pure NMT | High fluency, handles creative content | Inconsistent terminology, hard to audit | Marketing copy, blog content, support docs |

To decide which approach fits your workflow, work through these steps:

Audit your string types. Separate UI strings and structured content from freeform content like blog posts or emails. They require different approaches.

Identify your critical terms. List the product terms that must never vary: feature names, action labels, status indicators. These are your RBMT candidates.

Assess your regulatory environment. If you operate in finance, healthcare, legal, or government markets, RBMT or hybrid is not optional for those content types.

Map your review capacity. RBMT reduces post-edit volume for structured content. If your team is stretched thin on QA, RBMT pays for itself quickly.

Pilot a hybrid approach. Start with your 20 most-used UI strings under RBMT rules, run them through a hybrid pipeline, and measure consistency against a pure NMT baseline.

When consistency is more important than fluency, RBMT or hybrid is often the better choice. For product teams shipping to regulated markets or managing complex UX copy, this is almost always the right call.

The most effective localization strategies do not pick one approach and apply it rigidly. They map translation technology trends to content type and match the tool to the task. The product strings that define your user’s daily experience deserve the precision of rules. The marketing copy that brings them in? Let the neural model do its work.

You can also explore quality metrics for translation to understand how to benchmark your choices before you commit to a system at scale.

Measuring rules-based translation success in digital product teams

Choosing RBMT is only part of the solution. You need to know what “good” looks like for your product team, and standard MT metrics often give you the wrong answer.

BLEU scores (Bilingual Evaluation Understudy) and COMET scores are the most commonly cited metrics in machine translation evaluation. They measure how closely a machine translation matches human reference translations. For marketing content or literary translation, they are reasonable proxies for quality. For product localization with RBMT, they can actively mislead you.

Here is why. RBMT may produce translations that score lower on BLEU because they prioritize term consistency over surface-level fluency. A BLEU score might penalize a consistent but slightly less idiomatic translation even though that translation is exactly right for your product context. Research confirms that measuring success via consistency metrics is more meaningful than BLEU or COMET for digital product teams. This is a fundamental shift in how you should define localization quality.

“For digital products, measure success via consistency metrics over BLEU or COMET.”

So what should you measure instead? Here is a practical checklist for product teams:

Terminology hit rate: What percentage of defined glossary terms are translated correctly and consistently across all strings? Target 98% or higher.

Rework rate: How many translated strings require manual post-editing per release cycle? Track this over time. RBMT should drive it down.

Reviewer satisfaction score: Ask your localization reviewers to rate output quality on a 1 to 5 scale per language per release. This captures the nuance that automated metrics miss.

Cross-screen consistency audits: Randomly sample 10 to 20 screens per language per release and check whether key terms match across different product areas.

Support ticket volume by locale: An increase in support contacts from a specific locale after a release often signals a localization quality issue. This is a lagging but powerful real-world indicator.

Time to approve: Measure how long it takes your localization reviewers to approve translated content. Shorter review cycles indicate cleaner output.

These metrics give you a feedback loop that actually reflects the quality your users experience. They also help you make the case internally for investing in structured translation infrastructure. Numbers like “rework reduced by 40%” or “reviewer approval time cut in half” resonate with product leaders far more than a BLEU score improvement.

A practical perspective: Why most teams undervalue structured translation

Here is where many teams miss the point entirely, and it is worth being direct about it.

The current enthusiasm around large language models and neural translation is understandable. The outputs are often impressive. The demos are compelling. But product teams chasing the neural buzz are frequently ignoring a set of hidden costs that accumulate quietly: hours of rework when terminology drifts, user trust eroding from inconsistent labels, review cycles that balloon before every release, and the real risk of compliance failures in regulated markets.

The irony is that RBMT is often dismissed as “old technology” by the same teams who are manually writing glossary exceptions and style guide notes to correct their neural model’s inconsistencies. They are doing the work of a rules system by hand, without the scalability of one.

What separates effective localization programs from chaotic ones is not sophistication of the neural model. It is traceability. When a term is wrong in six languages across 200 strings, you need to know why it happened and fix it in one place. Rules-based systems give you that. Neural systems, by themselves, do not.

There is also a cultural factor. Engineers often own the localization stack, which means rule definition and glossary maintenance can feel like “not their problem.” But the UX writers and product managers who actually know what “workspace,” “pipeline,” or “integration” means in context are the exact people who should be defining those rules. When those teams get involved in building the RBMT glossary, translation quality jumps immediately because the people who understand the product are now encoding their knowledge directly into the system.

You can see real localization challenge examples that illustrate exactly this dynamic: organizations that brought product writers into the localization infrastructure saw faster releases, fewer review escalations, and measurably higher user satisfaction in new markets. Structured translation is not boring. It is the foundation that makes everything else work.

Take rules-based translation further with Gleef

Ready to put rules-based translation into practice? Here is how to make it effortless in your product development workflow.

Gleef is built for product teams who want translation consistency without the manual overhead of managing it across spreadsheets, review tools, and disconnected pipelines. The platform’s glossary and content consistency features let you encode your critical terminology directly into your localization workflow, so your RBMT rules travel with your content, not against it.

With the Gleef Figma Plugin, your product designers and UX writers can manage translations in context, inside the tools they already use, without switching platforms. Hybrid translation logic, including rules-based enforcement for key terms and AI-powered fluency for surrounding content, is integrated into the workflow. Your team ships faster, reviewers spend less time correcting drift, and your product arrives in every market with a consistent, trustworthy voice.

Frequently asked questions

What are the main types of rules-based translation?

There are three distinct types, direct, transfer-based, and interlingual, differentiated by how much language structure they analyze and how abstract their intermediate representations are.

When should product teams choose rules-based over neural translation?

Choose rules-based for high-consistency tasks like UI texts or regulated terms. Constrained and hybrid systems consistently outperform pure neural models on precision-critical localization tasks, while neural excels in creative, freeform content.

How do you measure rules-based translation quality?

Success is best tracked with terminology hit rate, reduction in manual edits, and reviewer satisfaction scores. Research confirms that consistency metrics outperform BLEU or COMET as quality indicators for digital product translation.

Can rules-based translation be combined with AI approaches?

Yes, and it often should be. Hybrid RBMT and NMT systems blend rule-enforced terminology with neural fluency, delivering better results in specialized product localization than either approach alone.