Machine translation is fast, impressively so. But speed without quality is just noise delivered efficiently. Many product teams ship localized features assuming that if the words are technically correct, the job is done. They’re not wrong to trust automation, but they’re missing something critical: translation quality is far more than word-for-word accuracy. It encompasses fluency, terminology consistency, style adherence, locale conventions, and cultural verity. This guide breaks down what translation quality actually means for digital products, how to measure it with real frameworks, where automated tools fall short, and how to build a quality playbook your team can own.

Key Takeaways

Point | Details |

|---|---|

Quality is multi-dimensional | True translation quality covers accuracy, fluency, style, terminology, and context. |

No single metric fits all | Teams should select or tailor evaluation methods based on their specific product goals. |

Automation needs oversight | AI and automated checks are powerful but require human review to catch nuanced issues. |

Ongoing reviews matter most | Continuous audits and process improvement are key to translation quality success. |

What translation quality means for tech products

Literal accuracy is table stakes. For digital products, it’s not enough to translate words correctly if the result sounds robotic, misses your brand voice, or confuses users in a target market. A button that reads “Click here to proceed” in English might translate literally into German but feel stiff and unnatural to native speakers. That friction erodes trust, and trust is your product’s most valuable currency.

Translation quality standards for tech products cover six interconnected dimensions:

Accuracy: Does the translation convey the exact meaning of the source?

Fluency: Does it read naturally in the target language?

Terminology: Are domain-specific terms used consistently across the product?

Style: Does the tone match your brand voice and UX writing guidelines?

Locale: Are date formats, currencies, and cultural references adapted correctly?

Verity: Is the translation truthful and free from misleading implications?

These six dimensions come directly from the MQM framework, which stands for Multidimensional Quality Metrics, the most widely adopted standard for professional translation error analysis.

“Translation quality is the degree to which a translation fulfills agreed specifications, encompassing accuracy, fluency, terminology consistency, style adherence, locale conventions, and verity.”

When any one of these dimensions breaks down, the user experience suffers. A terminology inconsistency in a SaaS dashboard, where “workspace” becomes “area” in one screen and “zone” in another, creates cognitive friction. A style failure in a push notification can make your product feel like it was built by a committee rather than a team that cares. These aren’t minor annoyances. They’re brand perception problems at scale.

Core frameworks and real-world metrics

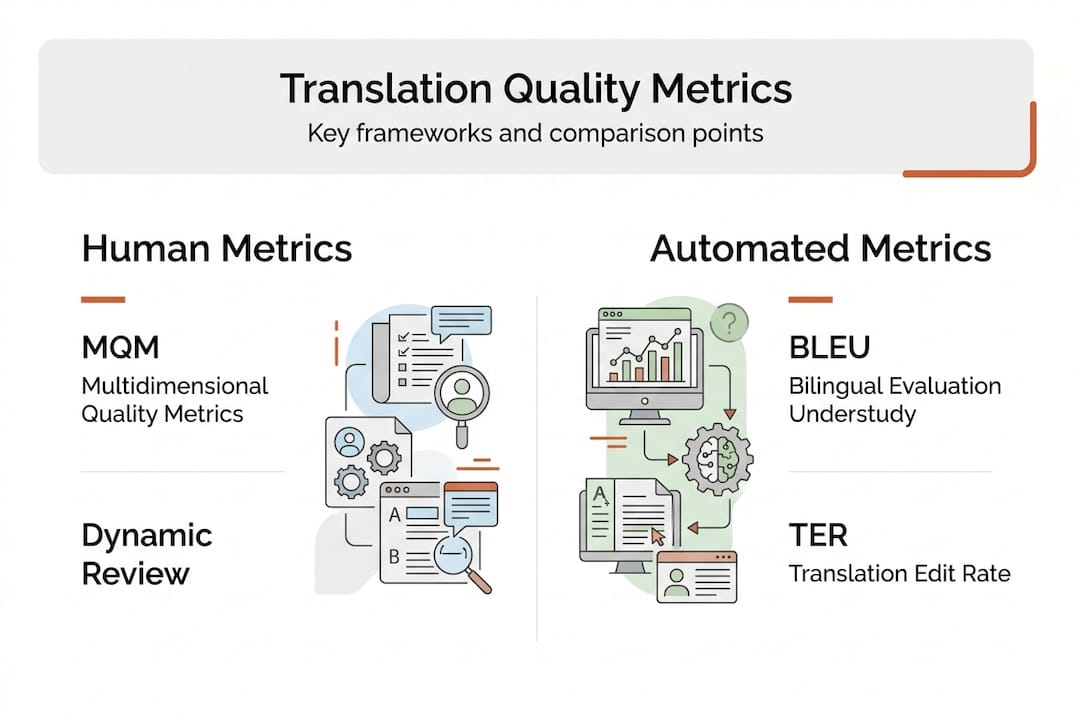

Understanding the components of quality sets the stage for how teams can actually measure and benchmark translations. Two frameworks dominate the conversation: MQM and BLEU.

MQM (Multidimensional Quality Metrics) is a hierarchical error taxonomy. It categorizes errors by type (accuracy, fluency, terminology, etc.) and assigns severity weights (minor, major, critical). This gives teams a structured, auditable way to score translations and hold vendors accountable. It’s the gold standard for professional localization.

BLEU (Bilingual Evaluation Understudy) is an automated metric that compares machine output against reference translations using n-gram overlap. It’s fast and cheap, but MQM outperforms BLEU for real-world quality assessment because BLEU correlates poorly with human judgment, especially for nuanced UX copy.

Here’s a practical comparison of the main evaluation approaches:

Framework | Type | Strengths | Weaknesses | Best for |

|---|---|---|---|---|

MQM | Human/hybrid | Detailed, auditable, severity-weighted | Time-intensive | Launch, critical markets |

BLEU | Automated | Fast, scalable, cheap | Misses nuance, tone, context | Bulk MT screening |

LQA | Human | Holistic, brand-aware | Slow, costly | High-stakes content |

AI-driven QE | Automated/hybrid | Scalable, improving fast | Still misses edge cases | Ongoing QA pipelines |

For most product teams, the smartest approach combines human-based and automated metrics. Use BLEU or AI quality estimation (QE) to flag obvious errors at scale, then route flagged segments to human reviewers for final judgment.

Here’s a practical sequence for implementing metrics in your workflow:

Define your quality threshold before translation starts. What MQM error rate is acceptable for your product tier?

Run automated screening (BLEU or AI QE) on all translated content to catch surface-level issues fast.

Apply MQM scoring to high-priority segments: onboarding flows, error messages, legal copy.

Review flagged segments with a human linguist or native-speaking UX writer.

Document and iterate by feeding error patterns back into your glossary and style guide.

Pro Tip: Don’t apply the same quality threshold to every string. A tooltip and a terms-of-service clause are not equal. Tier your content by risk level and allocate review resources accordingly.

Exploring translation challenges across different language pairs will also sharpen your instincts for where automated tools are most likely to stumble.

Pitfalls, edge cases, and machine translation limitations

Once teams understand the metrics, it’s crucial to call out real-world pitfalls, especially for tech products leaning on automation. Machine translation has improved dramatically, but it still struggles with deep linguistic and cultural understanding.

Common failure modes include:

Cultural tone-deafness: A phrase that’s casual and friendly in English can sound dismissive or even offensive in Japanese or Arabic.

Ambiguity: “Book the meeting” could mean schedule it or cancel it depending on context. MT often picks the wrong interpretation.

Domain-specific jargon: Terms like “pipeline,” “sprint,” or “token” have very different meanings in tech versus general language. MT frequently mistranslates them.

Word order failures: Languages like German or Japanese have fundamentally different sentence structures. Literal word-order mapping produces unreadable output.

Non-standard input: Abbreviations, emojis, placeholders, and dynamic variables in UI strings confuse MT engines and produce broken output.

Edge cases in MT include cultural tone-deafness, ambiguity, jargon, word order issues, and non-standard input, and even top-tier systems struggle with deep contextual understanding.

The “just run it through MT” approach backfires most painfully in two scenarios. First, when you’re entering a new market where cultural nuance is a competitive differentiator. Second, when your product handles sensitive content like health data, financial information, or legal agreements. In those cases, a mistranslation isn’t just a UX problem. It’s a liability.

Pro Tip: Always test MT output with native speakers before launch, even if it’s just a five-minute review of your top 20 most-viewed strings. The localization challenges you catch in that review will save you from embarrassing rollbacks.

For teams serious about verifying translation accuracy, building a structured review checklist for MT output is a non-negotiable step, not an optional polish.

Human, hybrid, and automated evaluation: When and how to use each

Having seen the pitfalls, teams need clear guidance on how to practically structure translation quality assurance for different contexts. The answer isn’t “always use humans” or “always automate.” It’s about matching the evaluation method to the risk level and scale of the content.

Hybrid workflows that combine AI quality estimation with human review catch up to 88% of errors while enabling significant automation, making them the practical sweet spot for most product teams.

Here’s when each approach fits best:

Full human review: New market launches, major product updates, legal or compliance copy, high-visibility marketing strings. Human evaluation remains the gold standard for content where errors carry real consequences.

Hybrid (AI QE + human): Ongoing product updates, feature releases, support content. AI flags likely errors; humans confirm and correct. This scales without sacrificing coverage.

Full automation: Internal tools, low-visibility strings, rapid iteration cycles where speed matters more than perfection. Acceptable when errors are low-risk and easily corrected post-launch.

Workflow type | Error catch rate | Typical tech stack |

|---|---|---|

Full human review | Up to 95% | TMS + human linguists |

Hybrid (AI QE + human) | Up to 88% | MT + QE tools + reviewer |

Full automation | 60-75% | MT + BLEU/automated QE |

The key insight here is that AI translation quality tools are not a replacement for human judgment. They’re a force multiplier. Use them to handle volume, and reserve human expertise for the decisions that actually move the needle on user experience.

Building a translation quality playbook for your team

After covering evaluation tactics, the final step is empowering your team to build a structured quality system you can own and continuously improve. A playbook isn’t a one-time document. It’s a living process.

Define quality specifications upfront. Before any translation work begins, document your expectations: acceptable error rates by content tier, required terminology sources, style guide references, and locale-specific rules.

Integrate quality checks into your agile workflow. Translation review should be a sprint task, not an afterthought. Assign ownership, set acceptance criteria, and treat localization bugs with the same urgency as code bugs.

Build and maintain a glossary. Consistent terminology is one of the highest-leverage investments you can make. A shared glossary prevents the “workspace vs. zone” problem before it starts.

Audit regularly. Schedule quarterly reviews of your translated content, especially for high-traffic screens and onboarding flows. Markets evolve, language evolves, and your product evolves.

Hold vendors accountable with data. Use MQM scores and error logs to give structured feedback to translation vendors or freelancers. Vague feedback produces vague improvements.

No universal metric fits all; teams should tailor processes, audit regularly, and ensure vendor accountability.

Tailoring your metrics to your content type matters enormously. UX copy needs fluency and style checks. Legal copy needs accuracy and verity checks. Marketing copy needs cultural resonance checks. A one-size-fits-all approach will always leave gaps.

For a deeper operational framework, the translation guide for product teams covers end-to-end workflows, and localization best practices will help you align your quality process with broader product strategy.

How Gleef tools help you raise translation quality

Everything covered in this guide, from MQM scoring to hybrid review workflows to glossary management, becomes dramatically easier when your tooling is built for it. Gleef is designed specifically for product teams who need translation quality at scale without the operational overhead.

The Gleef Figma plugin lets designers and UX writers review translations in context, directly inside their design files, so style and layout issues surface before they reach production. The Gleef CLI integrates quality checks into your development pipeline, catching errors at the string level before they ship. And Gleef Studio gives your entire team a centralized workspace for glossaries, translation memory, and in-context editing. Together, these tools turn your quality playbook from a document into a living, automated system.

Frequently asked questions

How is translation quality measured in software localization?

Most teams use frameworks like MQM with severity weighting or hybrid human-AI evaluation to assess accuracy, fluency, and other dimensions objectively. Automated tools like BLEU are useful for screening but should not be the sole measure.

What are the most common translation quality pitfalls in digital products?

Cultural missteps, ambiguity, and domain-specific language are leading pitfalls, especially with machine translation. Edge cases like tone-deafness and jargon consistently trip up even advanced MT systems.

Is BLEU score enough to guarantee quality translations?

No. BLEU correlates poorly with human judgment and misses critical errors in tone, terminology, and cultural fit. MQM or human review provides a far more reliable quality signal.

How can product teams ensure ongoing translation quality?

Define quality specs by content tier, audit translated content regularly, and use structured frameworks like MQM for vendor accountability. Treat localization quality as a continuous process, not a one-time checkpoint.